Welcome to my blog! I’m going to use this place to write about progress (and setbacks – refer to the site tagline!) on my electronics projects. If any of these projects look interesting to you and you’d like to replicate them, you may certainly use my posts and open-source hardware files as a guide, but I can’t guarantee success!

The tagline refers to the fact that while, yes, I do make quite a lot of mistakes, making them is a natural part of designing good products. Of course, that doesn’t mean one should go out of their way to “mess up” intentionally, but rather that one should accept mistakes when they rear their heads and try to learn from them. When I first started working on electronics, my “mistakes” (wiring things up wrong on my first PCB, blowing up chips, you name it…) often left me very discouraged. Now, however, I try to “enjoy” the blunders (as much as possible), laugh them off, and avoid repeating them next time (and then try not to get too disappointed in myself when I do!).

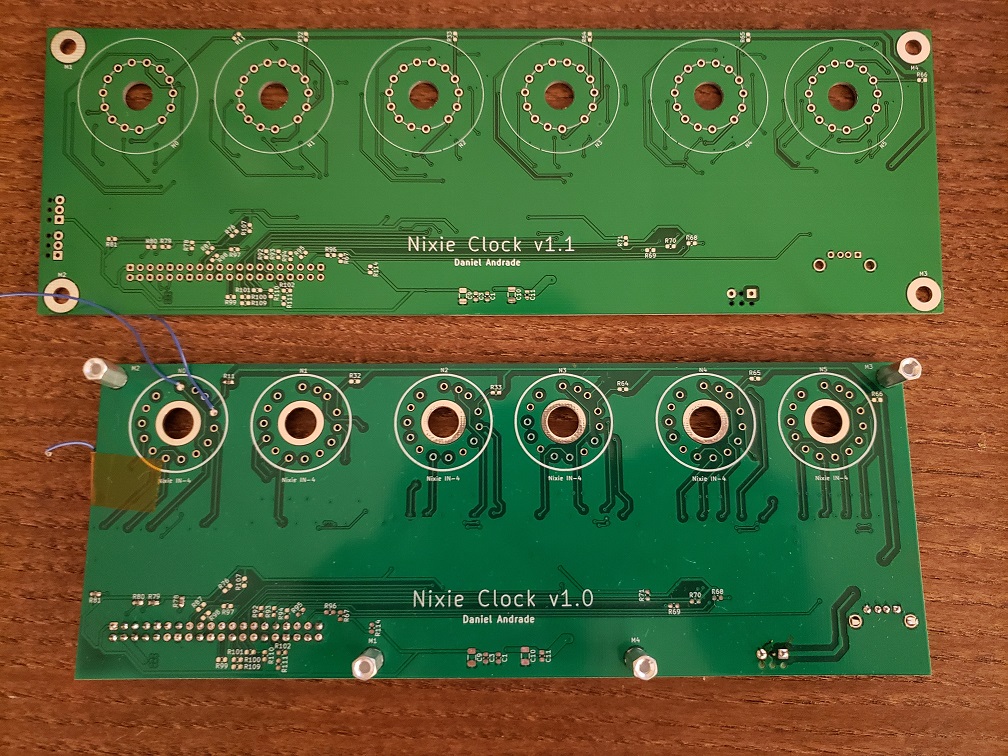

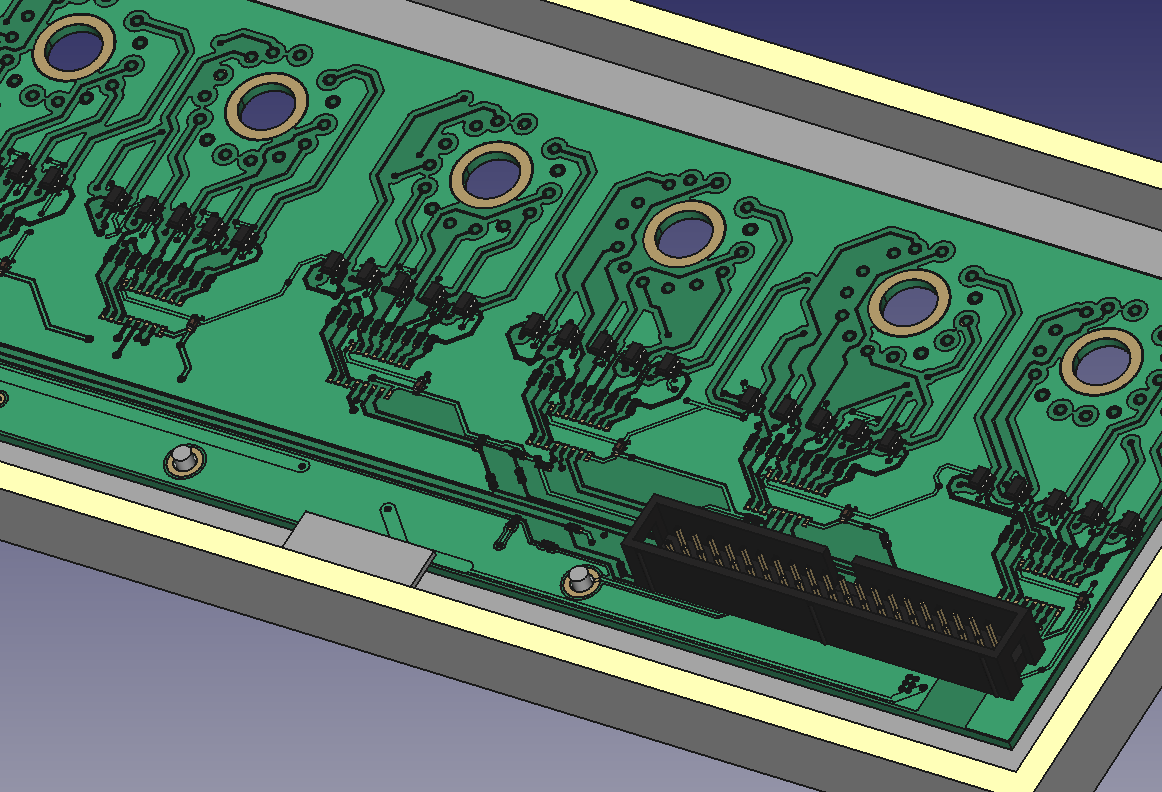

I’m not entirely sure what to do with this home page yet, so for now I’m just going to link to all the relevant places on this blog and post a gallery of some of my favorite project pictures. Feel free to go through the archives and categories below or start with the Projects or Recent Posts pages.

Post Categories

- Projects (12)

  - Nixie (7)

  - Oscilloscope (5)

Archives

- November 2020 (1)

- October 2020 (3)

- September 2020 (3)

- August 2020 (4)

- July 2020 (1)